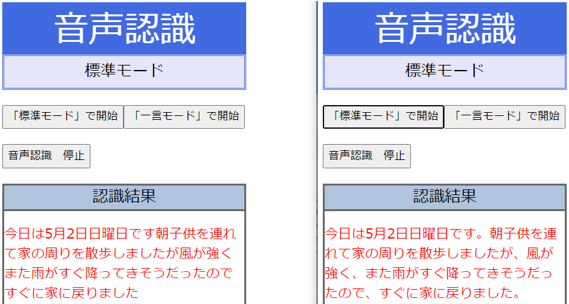

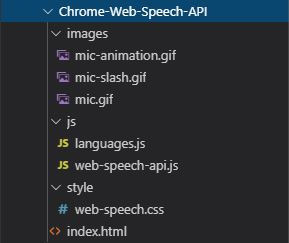

With the recent upsurge of Siri, Google Assistant and Amazon’s Alexa, speech recognition and synthesis have become an increasingly important tool in the developer’s toolbox. Working with speech data can not only improve the accessibility of your application; it can also increase conversion in your webshop, especially when customers shop on their mobile phones.

Native apps have a large advantage in this space, as Apple’s SiriKit and Google’s Assistant SDK can get you up and running in a few minutes to hours. For browsers, the story is different. If we disregard native apps for a second, plain old websites (or progressive web apps) seem to severely lag behind. How many websites can you mention off the top of your head that offer you the option to search with your voice? I can only think of a handful. Is it because people are scared to talk to their laptops, but are comfortable telling stories to their smartphones? Probably not. The biggest problem is that the easy solutions provided by libraries like SiriKit are not there for the web.

To do speech recognition and synthesis you need a massive amount of training data for all kinds of languages in all kinds of settings. As a lone wolf or small startup, accruing that amount of data is near-impossible. So, you go to the companies that have already managed to train their neural networks: the Googles and Amazons.

For a small amount of money per transcribed audio second, you can send your audio files to Google’s Cloud Speech-to-Text API or Amazon Transcribe. They transcribe the audio with sub-second response times. Although the technology is cool, setting it up can be a hassle, especially if you want to use the audio recorder provided in the browser. The recording format of the browser does not always match what the cloud transcribers prescribe, so you have to hit another backend that transcodes the files into something that works.

When I recently had to implement a proof of concept voice search with some of my colleagues, we came across a 2012 (!) specification for speech recognition called the Web Speech API Specification.

When using the `speak` function in the Web Speech API, in Chrome the speaking stops abruptly after a few seconds, in the middle of the text given to it, in a seemingly random place (without reaching the end). This only happens in Chrome (it works well on Firefox), tested on two different computers/systems. Have a look at this jsfiddle to see/listen: you can see that the `SpeechSynthesis` object's `speaking` flag stays on (`true`) after it stops speaking. I haven't seen any documented limit to the text passed to the utterance.

BTW, I've known about this since 2014 - when I was trying to add a speech feature to a browser extension I made (back then it was the TTS API available to Chrome extensions - the same thing happened there as well), but eventually didn't do it because of this apparent bug. Now I want to overcome this - if this is a bug, I will appreciate anyone directing me to the best place to report it.

EDIT: It seems to stop after about 15 seconds. Adding an interval that runs every 14 seconds and calls `resume()` seems to "fix" this.
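The interval workaround mentioned above could look roughly like the sketch below. It is not a confirmed fix. The text only mentions `resume()` on a 14-second interval; calling `pause()` right before it is an assumption, and the names `SynthLike`, `nudge` and `keepSpeechAlive` are hypothetical helpers made up for this example. The synthesizer is passed in as a parameter so the logic can be tried outside a browser.

```typescript
// Minimal shape of the parts of window.speechSynthesis that this sketch uses.
interface SynthLike {
  readonly speaking: boolean;
  pause(): void;
  resume(): void;
}

// Hypothetical helper: one "nudge" of the synthesizer.
// Assumption: pause() followed by resume() is what keeps Chrome speaking.
// Returns true if a nudge was sent, false if nothing was being spoken.
function nudge(synth: SynthLike): boolean {
  if (!synth.speaking) {
    return false;
  }
  synth.pause();
  synth.resume();
  return true;
}

// Hypothetical helper: nudges the synthesizer every intervalMs milliseconds
// (14 seconds by default, just under the ~15 seconds observed in the text).
// Returns a function that stops the timer; the caller is responsible for
// calling it, for example when the utterance ends.
function keepSpeechAlive(synth: SynthLike, intervalMs: number = 14000): () => void {
  const timer = setInterval(() => {
    nudge(synth);
  }, intervalMs);
  return () => clearInterval(timer);
}

// Browser usage (assumes window.speechSynthesis is available):
//
//   const utterance = new SpeechSynthesisUtterance(longText);
//   const stop = keepSpeechAlive(window.speechSynthesis);
//   utterance.onend = stop;
//   utterance.onerror = stop;
//   window.speechSynthesis.speak(utterance);
```

The timer is stopped from the utterance's `end` and `error` events instead of by polling the `speaking` flag, because that flag is reported above to stay `true` after Chrome goes silent.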
